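PyTorch Image Recognition, Part 004: Training a Convolutional Model, Width Optimization, Regularization, Dropout, Normalization, Increasing Model Depth, and Saving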


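# Fully connected layer:
# - Flattens the image, so spatial information is lost
# - Parameter count: 3072 * 512 ≈ 1.57 million weights
#
# Convolutional layer:
# - Keeps the 2D structure of the image
# - Parameter count: 16 * 3 * 3 * 3 = 432 weights (far fewer!), plus 16 biases
# - Shared weights, translation invariance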
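The parameter counts in the comments above can be checked directly. The following is a minimal sketch; the helper `count_params` is a name introduced here for illustration, and the layer sizes are taken from the comments (a 3 x 32 x 32 CIFAR image flattened to 3072 values, 512 hidden units, and a 3-to-16-channel 3x3 convolution).

```python
import torch.nn as nn


def count_params(module: nn.Module) -> int:
    """Total number of learnable scalars (weights and biases) in a module."""
    return sum(p.numel() for p in module.parameters())


# Fully connected layer on a flattened 3 x 32 x 32 image
fc = nn.Linear(3072, 512)
# Convolution with 3 input channels, 16 output channels and a 3x3 kernel
conv = nn.Conv2d(3, 16, kernel_size=3, padding=1)

fc_params = count_params(fc)      # 3072 * 512 weights + 512 biases
conv_params = count_params(conv)  # 16 * 3 * 3 * 3 weights + 16 biases

print("fully connected:", fc_params)
print("convolution:    ", conv_params)
```

The counts include the bias terms, so they come out slightly higher than the weight-only figures quoted in the comments.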

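import ssl

# Workaround for SSL certificate errors during the dataset download.
# Note: this disables HTTPS certificate verification for the whole process.
ssl._create_default_https_context = ssl._create_unverified_context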

from matplotlib import pyplot as plt

import numpy as np

import torch

# Set the tensor print format (more compact output)
torch.set_printoptions(edgeitems=2, linewidth=75)

# Fix the random seed so that results are reproducible
torch.manual_seed(123)

from torchvision import datasets

data_path = '/Volumes/c/work/aigc'

class_names= ['airplane','automobile','bird','cat','deer','dog','frog','horse','ship','truck']

from torchvision import transforms

# Training set: convert to tensors, then normalize each channel
transforms_cifar10 = datasets.CIFAR10(
    root=data_path, train=True, download=True,
    transform=transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.4915, 0.4823, 0.4468), (0.2470, 0.2435, 0.2616)),
    ]))

# Validation set: same preprocessing
transforms_cifar10_val = datasets.CIFAR10(
    root=data_path, train=False, download=True,
    transform=transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.4915, 0.4823, 0.4468), (0.2470, 0.2435, 0.2616)),
    ]))

import torch.nn as nn

softmax = nn.Softmax(dim=1)  # amplifies the large values and shrinks the small ones

label_map={0:0,2:1}

class_names=['airplane','bird']

cifar2= [(img,label_map[label]) for img,label in transforms_cifar10 if label in [0,2]]

cifar2_val= [(img,label_map[label]) for img,label in transforms_cifar10_val if label in [0,2]]

# 3 input channels (RGB)
# out_channels_medium = 16 # moderate: balances performance and accuracy
# kernel_size = 3 # 3x3 convolution kernel window, the most common choice in deep learning

conv = nn.Conv2d(3,16,3,padding=1)

print(conv)

img,_=cifar2[0]

out = conv(img.unsqueeze(0))

print(img.unsqueeze(0).shape,out.shape)

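# model = nn.Sequential(                  # sequential container: layers applied one after another
#     # First convolution block
#     nn.Conv2d(3, 16, 3, padding=1),     # convolution layer 1
#     nn.Tanh(),                          # activation 1
#     nn.MaxPool2d(2),                    # pooling layer 1
#
#     # Second convolution block
#     nn.Conv2d(16, 8, 3, padding=1),     # convolution layer 2
#     nn.Tanh(),                          # activation 2
#     nn.MaxPool2d(2),                    # pooling layer 2
#
#     # Fully connected layers
#     nn.Flatten(),                       # added: flatten the 8 x 8 x 8 feature maps before nn.Linear
#     nn.Linear(8 * 8 * 8, 32),           # fully connected layer 1
#     nn.Tanh(),                          # activation 3
#     nn.Linear(32, 2),                   # output layer
# )
# numel_list = [p.numel() for p in model.parameters()]
# print(sum(numel_list))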

import torch.nn.functional as F

class Net(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(3, 16, 3, padding=1)
        self.conv2 = nn.Conv2d(16, 8, 3, padding=1)
        self.fc1 = nn.Linear(8 * 8 * 8, 32)
        self.fc2 = nn.Linear(32, 2)

    def forward(self, x):
        out = F.max_pool2d(torch.tanh(self.conv1(x)), 2)
        out = F.max_pool2d(torch.tanh(self.conv2(out)), 2)
        out = out.view(-1, 8 * 8 * 8)
        out = torch.tanh(self.fc1(out))
        out = self.fc2(out)
        return out
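To see where the `8 * 8 * 8` input size of `fc1` comes from, it can help to trace the tensor shapes through the same operations that `forward` uses. This is a small sketch on a dummy input; the variable names (`s0`, `s1`, `s2`, `flat`, `no_pad`) are introduced here only for the illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

x = torch.zeros(1, 3, 32, 32)  # one dummy CIFAR-sized image (batch of 1)

conv1 = nn.Conv2d(3, 16, 3, padding=1)
conv2 = nn.Conv2d(16, 8, 3, padding=1)

with torch.no_grad():
    s0 = conv1(x)                                # padding=1 keeps the 32 x 32 size
    s1 = F.max_pool2d(torch.tanh(s0), 2)         # pooling halves it to 16 x 16
    s2 = F.max_pool2d(torch.tanh(conv2(s1)), 2)  # 8 channels, halved again to 8 x 8
    flat = s2.view(-1, 8 * 8 * 8)                # 8 channels * 8 * 8 = 512 features
    no_pad = nn.Conv2d(3, 16, 3)(x)              # without padding the output shrinks

for name, t in [("conv1", s0), ("pool1", s1), ("pool2", s2),
                ("flat", flat), ("conv1 without padding", no_pad)]:
    print(name, tuple(t.shape))
```

Each 2 x 2 max pooling halves the height and width, so two of them take 32 x 32 down to 8 x 8, and the second convolution leaves 8 channels.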

def training_loop(n_epoch, optimizer, model, loss_fn, train_loader):
    for epoch in range(1, n_epoch + 1):
        loss_train = 0
        for imgs, labels in train_loader:
            outputs = model(imgs)
            loss = loss_fn(outputs, labels)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
            loss_train += loss.item()
        if epoch % 10 == 0:
            print('epoch:', epoch, 'loss:', loss_train / len(train_loader))

train_loader = torch.utils.data.DataLoader(cifar2, batch_size=64, shuffle=True)

model=Net()

optimizer=torch.optim.SGD(model.parameters(),lr=0.001)

loss_fn = nn.CrossEntropyLoss()

training_loop(n_epoch=100,optimizer=optimizer,model=model,loss_fn=loss_fn,train_loader=train_loader)

val_loader = torch.utils.data.DataLoader(cifar2_val, batch_size=64, shuffle=True)

def validate(model, train_loader, val_loader):
    for name, loader in [("train", train_loader), ('val', val_loader)]:
        correct = 0
        total = 0
        with torch.no_grad():
            for imgs, labels in loader:
                outputs = model(imgs)
                _, predicted = torch.max(outputs, dim=1)
                total += labels.shape[0]
                correct += int((predicted == labels).sum())
        print(name, correct / total)

validate(model, train_loader, val_loader)

# torch.save(model.state_dict(), '/Volumes/c/work/aigc/cifar10.pth')
#
# loaded_model = Net()
# # load_state_dict() loads the weights in place; its return value only reports missing/unexpected keys
# loaded_model.load_state_dict(torch.load('/Volumes/c/work/aigc/cifar10.pth'))
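The commented-out lines above save the model's `state_dict` and load it into a fresh instance. Note that `load_state_dict` updates the model in place and returns a small report of missing and unexpected keys rather than the model itself. A minimal round trip might look like the sketch below. It uses a small stand-in model (`make_model`) and a temporary file path instead of the `Net` class and the path from the script; both are assumptions made so the example runs on its own.

```python
import os
import tempfile

import torch
import torch.nn as nn


def make_model() -> nn.Module:
    # Hypothetical stand-in for Net(): any module with the same architecture works
    return nn.Sequential(
        nn.Conv2d(3, 4, 3, padding=1),
        nn.Tanh(),
        nn.Flatten(),
        nn.Linear(4 * 32 * 32, 2),
    )


torch.manual_seed(0)
model = make_model()

# Save only the parameters (the state_dict), not the whole Python object
path = os.path.join(tempfile.mkdtemp(), "demo.pth")
torch.save(model.state_dict(), path)

# Rebuild the same architecture, then load the saved weights in place
loaded_model = make_model()
result = loaded_model.load_state_dict(torch.load(path))
loaded_model.eval()

# Both models should now produce the same output for the same input
x = torch.randn(1, 3, 32, 32)
with torch.no_grad():
    same = torch.allclose(model(x), loaded_model(x))

print(result)
print("outputs match:", same)
```

After loading, keep using `loaded_model` itself; the value returned by `load_state_dict` is only useful for checking that the keys matched.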

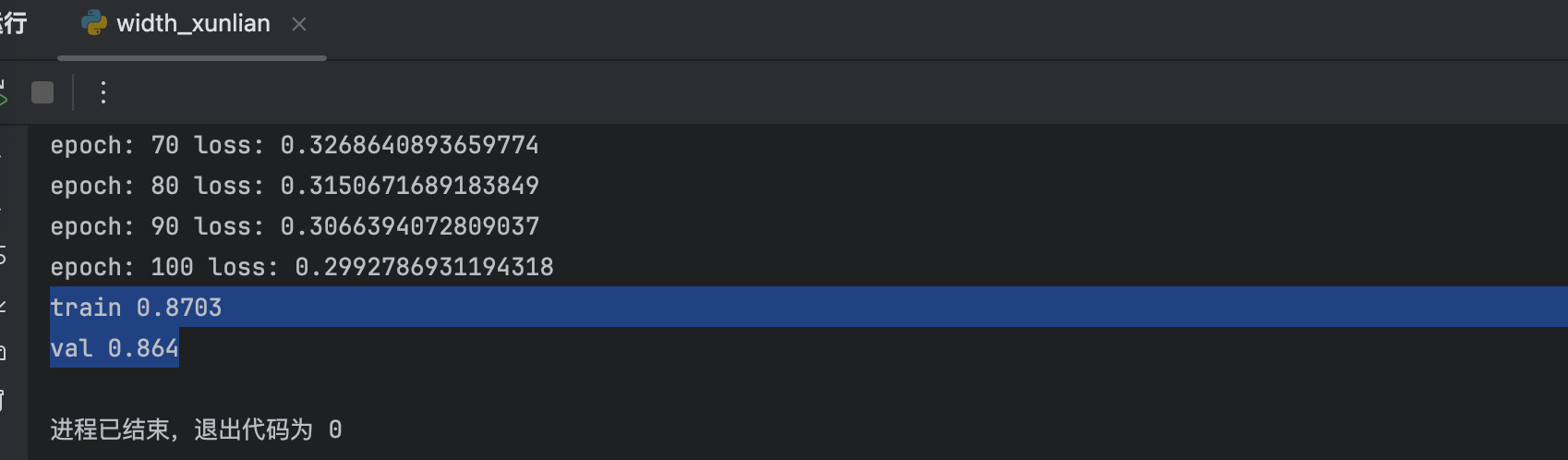

Width optimization

class Net(nn.Module):
    def __init__(self, n_chanel=32):
        super().__init__()
        self.n_chanel = n_chanel
        self.conv1 = nn.Conv2d(3, n_chanel, 3, padding=1)
        self.conv2 = nn.Conv2d(n_chanel, n_chanel // 2, 3, padding=1)
        self.fc1 = nn.Linear(n_chanel // 2 * 8 * 8, 32)
        self.fc2 = nn.Linear(32, 2)

    def forward(self, x):
        out = F.max_pool2d(torch.tanh(self.conv1(x)), 2)
        out = F.max_pool2d(torch.tanh(self.conv2(out)), 2)
        out = out.view(-1, self.n_chanel // 2 * 8 * 8)
        out = torch.tanh(self.fc1(out))
        out = self.fc2(out)
        return out

Without the width optimization the accuracy was 85%; with it, the accuracy rose to 87%.
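The wider model buys its accuracy with more parameters. As a rough check, the sketch below rebuilds the width-parameterized `Net` (keeping the `n_chanel` spelling used in the class) and counts parameters for `n_chanel=16`, which matches the original 16/8-channel model, and for the default `n_chanel=32`. The variable names `params_16` and `params_32` are introduced here for the illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self, n_chanel=32):
        super().__init__()
        self.n_chanel = n_chanel
        self.conv1 = nn.Conv2d(3, n_chanel, 3, padding=1)
        self.conv2 = nn.Conv2d(n_chanel, n_chanel // 2, 3, padding=1)
        self.fc1 = nn.Linear(n_chanel // 2 * 8 * 8, 32)
        self.fc2 = nn.Linear(32, 2)

    def forward(self, x):
        out = F.max_pool2d(torch.tanh(self.conv1(x)), 2)
        out = F.max_pool2d(torch.tanh(self.conv2(out)), 2)
        out = out.view(-1, self.n_chanel // 2 * 8 * 8)
        out = torch.tanh(self.fc1(out))
        return self.fc2(out)


def count_params(model: nn.Module) -> int:
    return sum(p.numel() for p in model.parameters())


params_16 = count_params(Net(n_chanel=16))  # same layer sizes as the original model
params_32 = count_params(Net(n_chanel=32))  # the widened model

print("n_chanel=16:", params_16)  # 18090
print("n_chanel=32:", params_32)  # 38386

# The forward pass still works on a CIFAR-sized batch
out = Net(n_chanel=32)(torch.zeros(4, 3, 32, 32))
print(tuple(out.shape))  # (4, 2)
```

Doubling the width roughly doubles the parameter count here, most of it in `fc1`, whose input size grows with `n_chanel // 2`.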

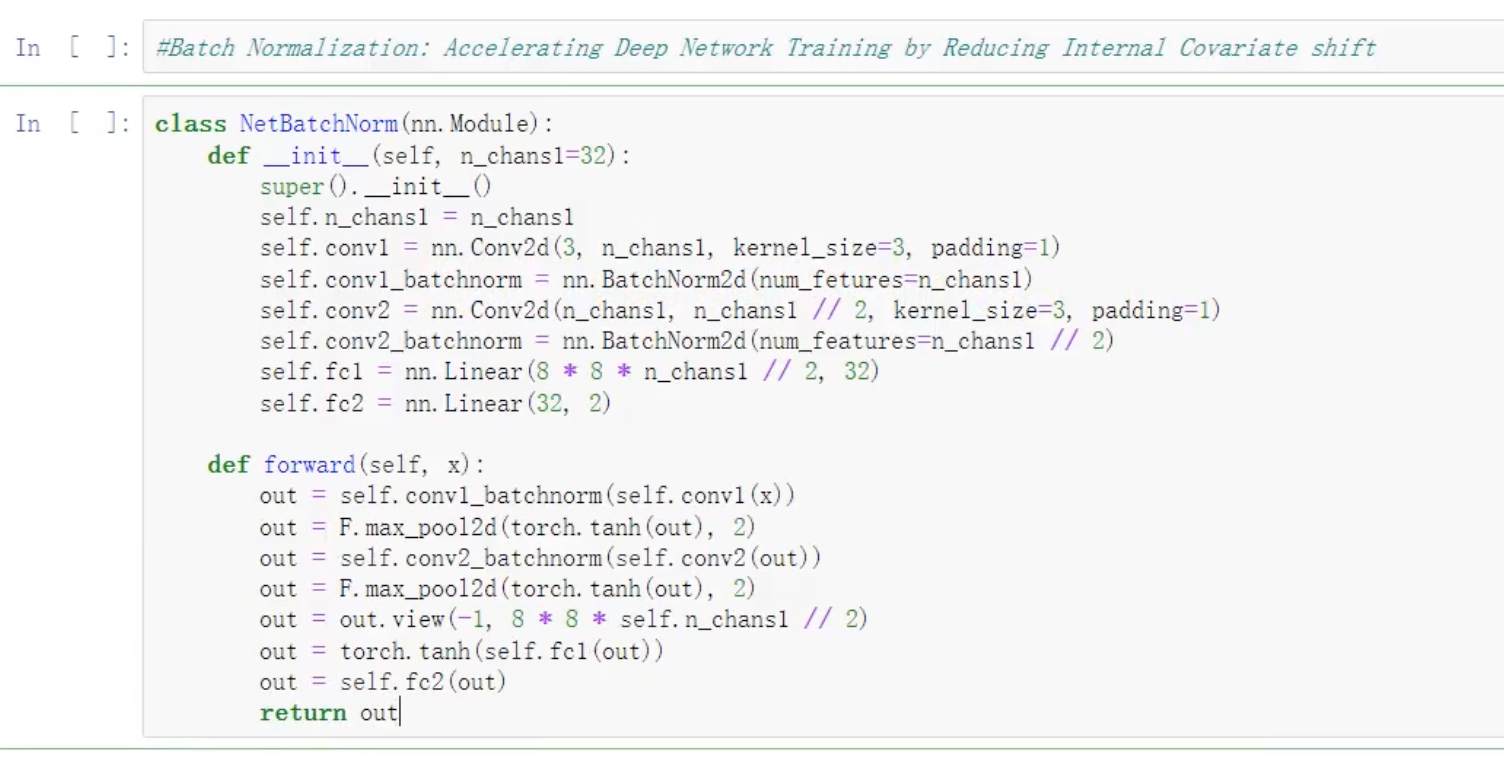

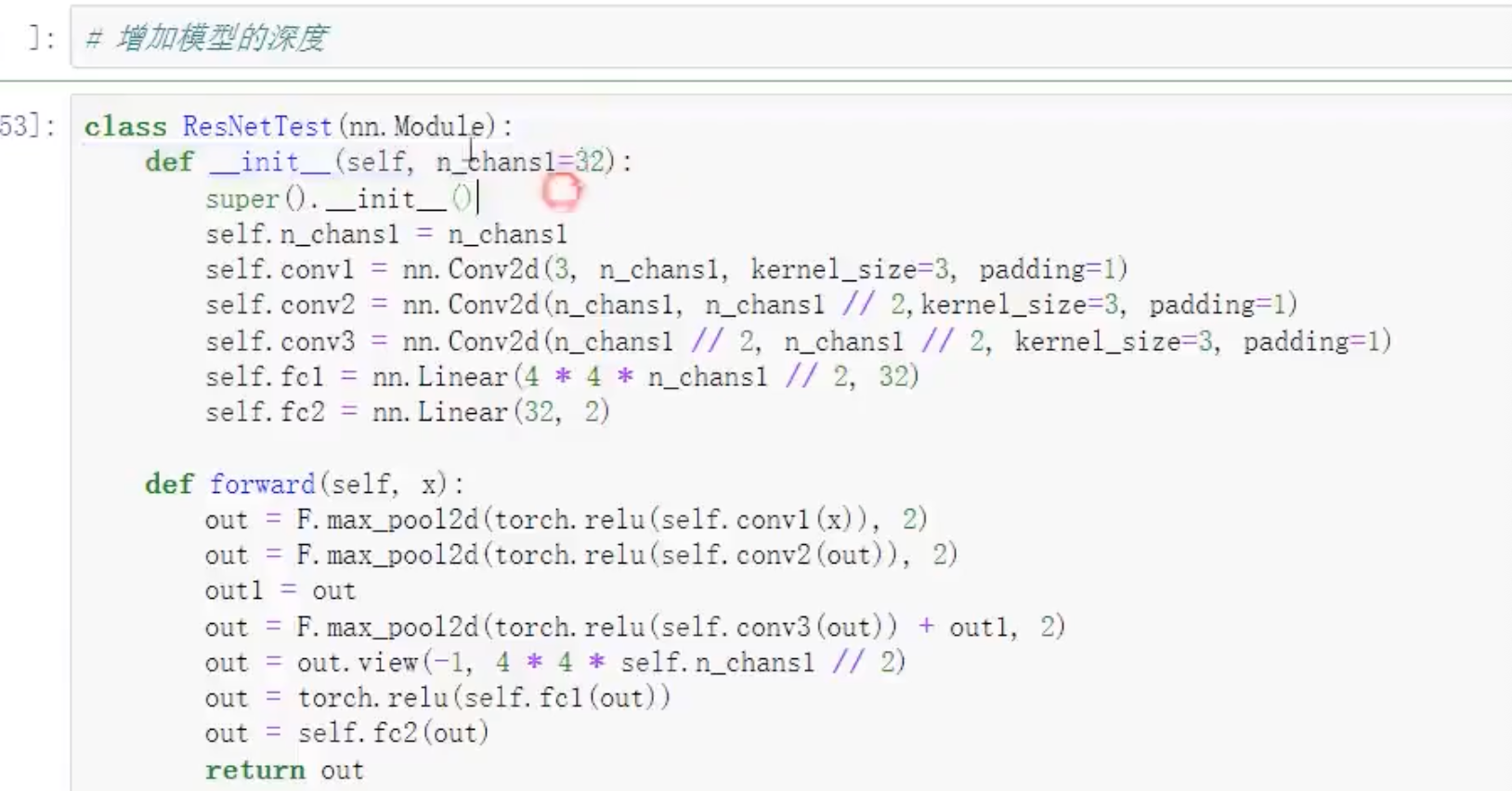

Regularization
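The training loop in this section adds an L2 penalty by hand: `lambda_12` times the sum of squared parameters. Since the gradient of that term is `2 * lambda_12 * p`, a plain SGD step with the manual penalty should match a step taken with the optimizer's `weight_decay` set to `2 * lambda_12`. The sketch below checks this on a small stand-in model; `model_a`, `model_b`, the random batch, and `lr = 0.1` are made up for the illustration and are not part of the original script.

```python
import copy

import torch
import torch.nn as nn

torch.manual_seed(0)
model_a = nn.Linear(4, 2)          # hypothetical tiny model
model_b = copy.deepcopy(model_a)   # identical starting weights

x = torch.randn(8, 4)              # made-up batch of 8 samples
y = torch.randint(0, 2, (8,))      # made-up binary labels
loss_fn = nn.CrossEntropyLoss()
lambda_12 = 0.0001
lr = 0.1

# (a) Manual L2 penalty added to the loss, as in the training loop
opt_a = torch.optim.SGD(model_a.parameters(), lr=lr)
loss_a = loss_fn(model_a(x), y)
norm_12 = sum(p.pow(2.0).sum() for p in model_a.parameters())
loss_a = loss_a + lambda_12 * norm_12
opt_a.zero_grad()
loss_a.backward()
opt_a.step()

# (b) Same step, with the penalty expressed through weight_decay instead
opt_b = torch.optim.SGD(model_b.parameters(), lr=lr, weight_decay=2 * lambda_12)
loss_b = loss_fn(model_b(x), y)
opt_b.zero_grad()
loss_b.backward()
opt_b.step()

# Largest difference between the two sets of updated parameters
max_diff = max(
    (pa - pb).abs().max().item()
    for pa, pb in zip(model_a.parameters(), model_b.parameters())
)
print("max parameter difference:", max_diff)
```

One practical difference: with `weight_decay` the penalty is not part of the reported loss value, whereas the manual version includes it in `loss.item()`.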

def training_loop(n_epoch, optimizer, model, loss_fn, train_loader):
    for epoch in range(1, n_epoch + 1):
        loss_train = 0
        for imgs, labels in train_loader:
            outputs = model(imgs)
            loss = loss_fn(outputs, labels)

            # L2 regularization: add lambda * (sum of squared parameters) to the loss
            lambda_12 = 0.0001
            norm_12 = sum(p.pow(2.0).sum() for p in model.parameters())
            loss = loss + lambda_12 * norm_12

            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
            loss_train += loss.item()
        if epoch % 10 == 0:
            print('epoch:', epoch, 'loss:', loss_train / len(train_loader))

Dropout

class Net(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(3, 16, 3, padding=1)
        # dropout
        self.conv1_dropout = nn.Dropout2d(0.4)
        self.conv2 = nn.Conv2d(16, 8, 3, padding=1)
        # dropout
        self.conv2_dropout = nn.Dropout2d(0.4)
        self.fc1 = nn.Linear(8 * 8 * 8, 32)
        self.fc2 = nn.Linear(32, 2)

    def forward(self, x):
        out = F.max_pool2d(torch.tanh(self.conv1(x)), 2)
        out = self.conv1_dropout(out)
        out = F.max_pool2d(torch.tanh(self.conv2(out)), 2)
        out = self.conv2_dropout(out)
        out = out.view(-1, 8 * 8 * 8)
        out = torch.tanh(self.fc1(out))
        out = self.fc2(out)
        return out
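`nn.Dropout2d` behaves differently in training and evaluation mode, which matters when measuring accuracy. The sketch below, on a made-up tensor of ones, shows that in training mode whole channels are either zeroed or scaled by `1 / (1 - 0.4)`, while in evaluation mode the layer passes its input through unchanged. The names `drop`, `out_train`, and `out_eval` are introduced here for the illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
drop = nn.Dropout2d(0.4)
x = torch.ones(1, 16, 4, 4)  # made-up activation: 16 channels of 4 x 4 ones

# Training mode: each channel is dropped as a whole with probability 0.4,
# and the surviving channels are scaled by 1 / (1 - 0.4)
drop.train()
out_train = drop(x)
per_channel = out_train.view(16, -1)
channel_is_uniform = bool((per_channel == per_channel[:, :1]).all())
values = sorted(set(round(v, 4) for v in out_train.unique().tolist()))

# Evaluation mode: dropout is switched off and the input passes through
drop.eval()
out_eval = drop(x)
identity_in_eval = torch.equal(out_eval, x)

print("values seen in training mode:", values)
print("whole channels dropped together:", channel_is_uniform)
print("identity in eval mode:", identity_in_eval)
```

For a model containing dropout layers, this suggests calling `model.train()` before the training loop and `model.eval()` before computing validation accuracy; otherwise the accuracy would be measured with channels still being dropped at random.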
